Archive

Fun with Grove Words and Cipher Wheels

What is a Grove word? The answer is a little fuzzy, but simplistically a Grove word is a VMS word that begins with one of the gallows glyphs. These words are often page or paragraph initial. Emma May Smith has a good explanation in her recent blog entry.

Mr. Grove observed the peculiar feature that some words beginning with a gallows glyph are also valid words if you remove the gallows glyph. For example, the word EVA kodaiin starts with gallows k, and odaiin is also a valid word.

It turns out that if you look at all words in the VMS, 46% of them have this property: remove the first glyph and you are left with a valid VMS word. Compare this with English, where only around 8% of words produce valid words if you remove the first letter. Making up the 46% we have 38% from non-Grove words (i.e. non-gallows initial), and 8% from Grove words.

To round out the statistics, about 13% of all VMS words have an initial gallows glyph.
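The percentages above can be reproduced from any word list with a few lines of code. This is only a minimal sketch: it assumes each glyph is a single character, that the gallows are EVA `k`, `t`, `p` and `f`, and that the counts are taken over distinct words rather than over every occurrence. The function name `drop_first_stats` is made up for illustration.

```python
def drop_first_stats(words, gallows=frozenset("ktpf")):
    """Fractions of distinct words that are still valid words after
    their first glyph is removed, split by gallows-initial or not."""
    vocab = {w for w in words if w}          # distinct, non-empty words
    total = len(vocab)
    grove = [w for w in vocab if w[0] in gallows]
    return {
        # share of words that start with a gallows glyph
        "gallows_initial": len(grove) / total,
        # gallows-initial words whose remainder is also a word
        "valid_after_drop_grove": sum(w[1:] in vocab for w in grove) / total,
        # all other words whose remainder is also a word
        "valid_after_drop_other": sum(
            w[1:] in vocab for w in vocab if w[0] not in gallows
        ) / total,
    }


# Toy word list, not real VMS statistics.
stats = drop_first_stats(["kodaiin", "odaiin", "daiin", "chol", "ol"])
print(stats)
```

Run on a full transcription, the last two numbers would correspond to the 8% and 38% figures quoted above, if the same counting convention was used.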

Consider the nine wheels above, where one of the wheels contains gallows glyphs, and the other wheels contain other glyphs. These wheels can be selected in 2^9 - 1, i.e. 511, different ways, to make words of length between 1 and 9.

The probability of selecting wheel 3 as the first wheel for the word is about 12.5%. In other words, with these 9 wheels, 12.5% of the time we’d create a gallows-initial “Grove” word – very close to what we observe in the VMS (13%). In fact, this figure of 12.5% is independent of the number of cipher wheels: as long as there are at least three wheels and they are used left to right, and the gallows glyphs fully occupy the third wheel, then 12.5% of the generated words will be Grove types.

As a corollary, it’s clear that for Grove types generated with the wheels, removing the first glyph will produce a valid word, as it is equivalent to generating a word starting at wheel 4 or later.
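The 12.5% figure can be checked by listing every way of selecting wheels. The sketch below assumes that each wheel is independently either used or skipped, and that the wheels are always read left to right; `first_wheel_counts` is an illustrative name.

```python
from itertools import product


def first_wheel_counts(n_wheels=9, gallows_wheel=3):
    """Count the non-empty wheel selections, and how many of them
    use the gallows wheel as the first (leftmost) wheel."""
    hits = total = 0
    for mask in product((0, 1), repeat=n_wheels):
        if not any(mask):
            continue                    # no wheel used: no word produced
        total += 1
        first = mask.index(1) + 1       # 1-based number of the first wheel used
        hits += first == gallows_wheel
    return hits, total


hits, total = first_wheel_counts()
print(hits, total, hits / total)        # 64 of the 511 selections
print(0.5 ** 3)                         # skip wheel 1, skip wheel 2, use wheel 3
```

Counted over the 511 non-empty selections the fraction is 64/511, a shade above 12.5%. The exact 12.5% is the probability 0.5 x 0.5 x 0.5 of skipping wheels 1 and 2 and using wheel 3, which does not depend on how many wheels follow.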

So what of the 54% of VMS words for which removing the initial glyph does not produce a valid VMS word? This can be explained if the number of different words used and written in the VMS is simply less than the total number of possible words that the author’s wheels can produce. What is the expected vocabulary size if we know there are 7,552 different words written in the VMS (Takahashi), and we are missing 54% of them? It is simply 1.54 x 7,552 = 11,630 words, or thereabouts.

(Aside: the wheels above could just as easily be represented and used as a table with nine columns.)

In summary, “Grove” words (gallows initial) are ~13% of all words in the Voynich manuscript, and this fraction is what you’d expect if the text was produced using cipher wheels.

Word Length Distributions

In the previous blog post, we looked at the distribution of word lengths in the EVA transcription, and compared it with the binomial distribution for 9, as per the work of Stolfi. They matched well enough, as I had denoted EVA ch, sh, ain, aiin and qo as single glyphs, in a similar fashion as Stolfi:

For this page, we will define symbol as Currier did; i.e. EVA ch and sh will be counted as single symbols, and so are EVA cth, ckh, etc.

https://www.ic.unicamp.br/~stolfi/voynich/00-12-21-word-length-distr/

i.e. he reduced some of the EVA glyph sequences to single symbols.

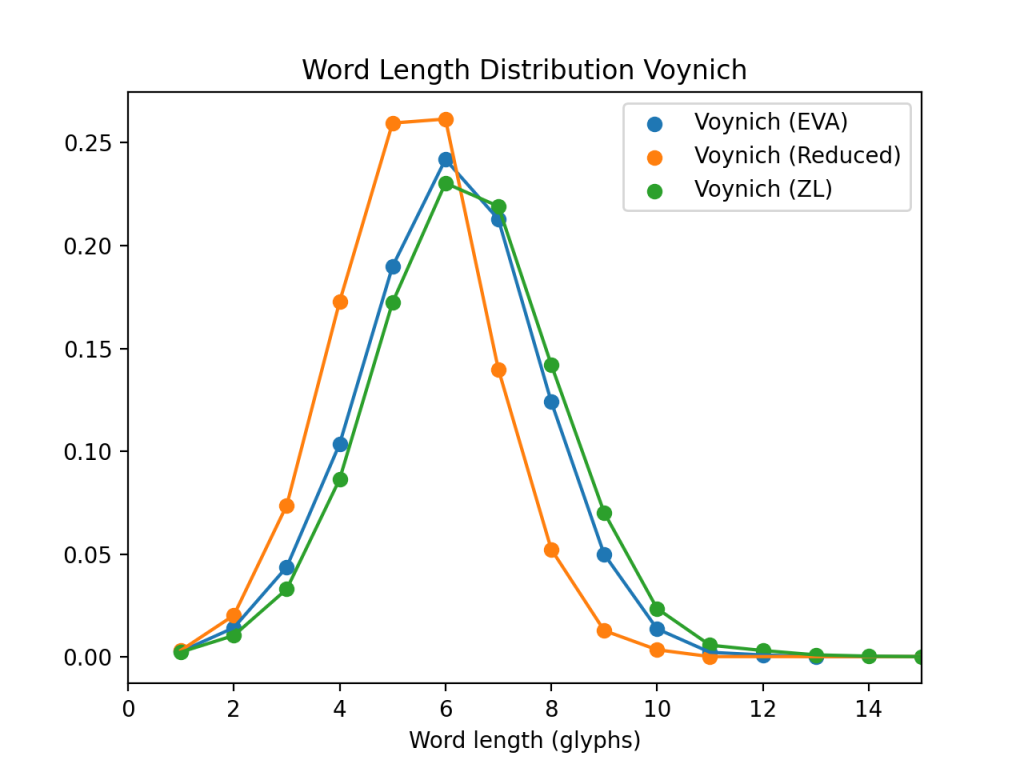

Without making these reductions, so leaving the EVA transcription unchanged, the distributions of course tend to higher values. As a check of my sanity, Marco Ponzi was kind enough to send me a list of VMS words he’d extracted from the ZL transcription, so that I could compare it with the words I extracted from the Takahashi EVA. In the following plot I show the three word length distributions: EVA, ZL and the reduced EVA with ch, sh, ain, aiin and qo as single glyphs.

Reassuringly, the EVA and ZL (green and blue curves) match quite well, as they should, and the Reduced matches Stolfi’s result. (Curiously, the ZL transcription has a total of 8078 different words, compared with 7552 for Takahashi EVA – which warrants further investigation.)

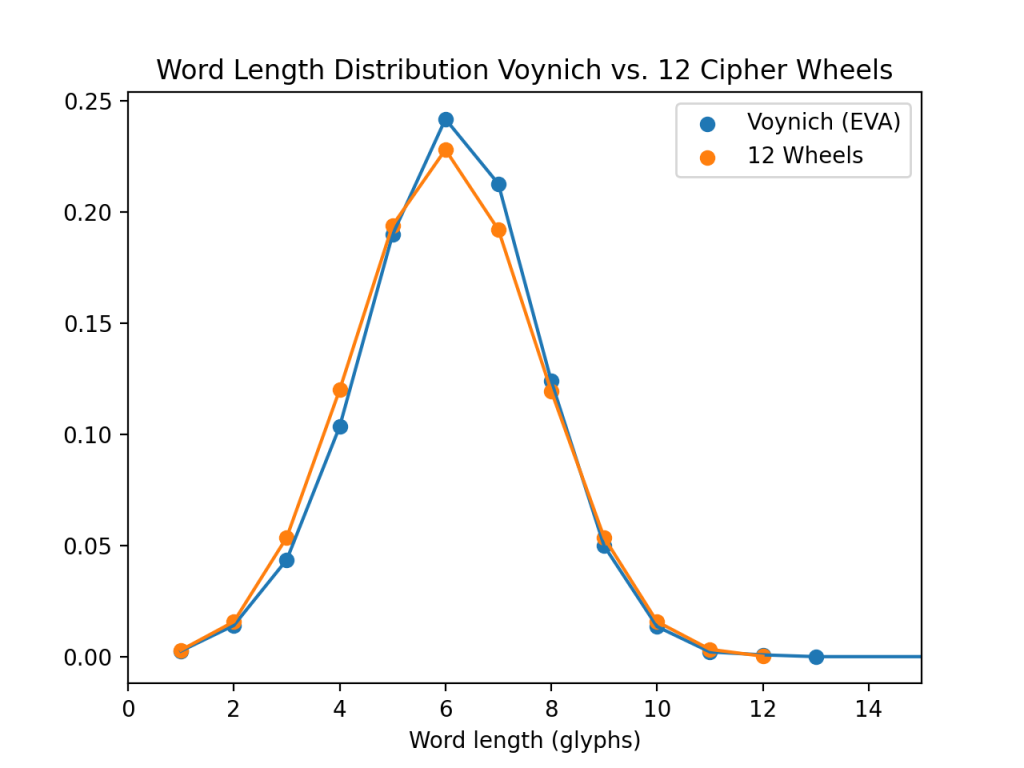

The EVA distribution now matches a binomial of (n=12,p=0.5), i.e. using 12 cipher wheels with a probability of 50% for a glyph being used from each wheel.
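For reference, the binomial curves for 9 and 12 wheels are easy to compute and to compare with a simulation of the wheels. This is a sketch under the assumption that each wheel independently contributes a glyph with probability p; the function names are illustrative.

```python
import random
from math import comb


def binomial_pmf(n, p=0.5):
    """Probability of a word of length k = 0..n from n wheels."""
    return [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]


def simulate_lengths(n_wheels, trials=100_000, p=0.5, seed=0):
    """Observed word-length frequencies when each wheel is used
    with probability p, independently of the others."""
    rng = random.Random(seed)
    counts = [0] * (n_wheels + 1)
    for _ in range(trials):
        length = sum(rng.random() < p for _ in range(n_wheels))
        counts[length] += 1
    return [c / trials for c in counts]


for n in (9, 12):
    print(n, [round(x, 3) for x in binomial_pmf(n)])
```

Note that the binomial includes length 0 (no wheel used), which produces no word at all, so a comparison with real text has to drop or renormalise that bin.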

Nine Cipher Wheels

UPDATE (12 Aug 2021): the plots and results discussed in this post used a version of EVA that replaces some common glyph sequences by a single glyph, namely ch, sh, ain, aiin, and qo. Clearly, this tends to reduce the average word length. A later post will discuss the distributions obtained with words without this simplification.

The lengths of VMS words follow the binomial distribution for 9, as observed by Stolfi, and as discussed in Rene’s recent paper. This binomial distribution can be obtained from a set of 9 cipher wheels, where each wheel has a 50% chance of contributing one of its glyphs to the word being assembled, and the lengths of the resulting words plotted:

In the above plot, the orange line shows the distribution of word lengths from EVA, and the blue line shows the distribution of word lengths obtained by using the following set of 9 cipher wheels to generate a large number of random words:

With the cipher wheels shown, about 50% of the generated words will contain a gallows glyph, and this is, perhaps not coincidentally, the case in the VMS text, too.

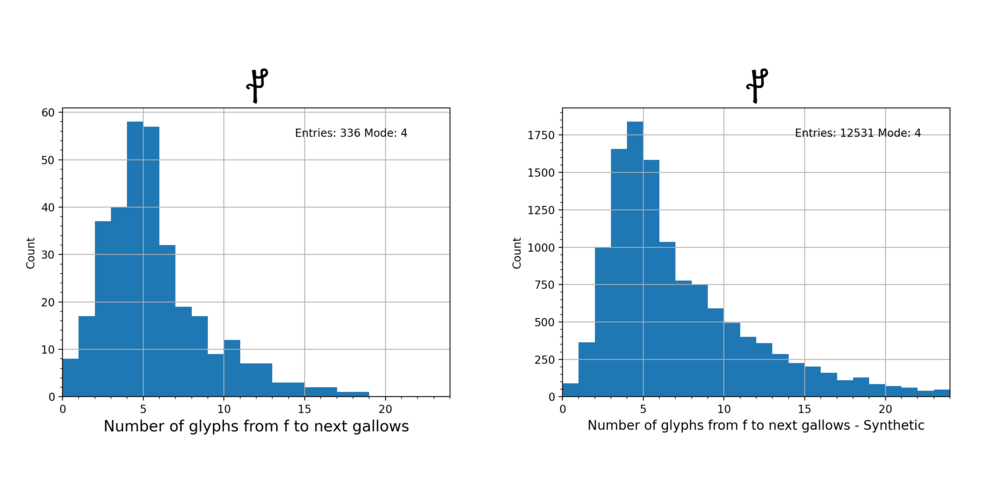

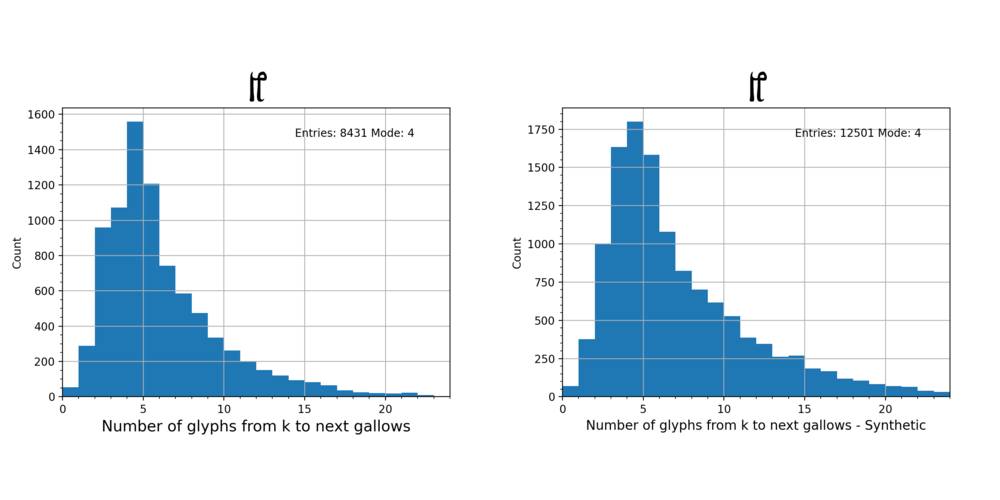

Using the same technique as applied in my earlier blog post, where I looked at the counts between gallows glyphs in the VMS text, we can look at the same distributions for words generated with the above wheels, assembled into lines of text, and ignoring spaces between words. The results are very similar, and shown below.

Here are the others:

Does the language of Dante fit the VMs?

I have spent many pleasurable hours checking various exotic cipher and code ideas with a GA, and none of them remotely fits, except one. My faith in the GA technique comes from how quickly it gives an idea of how well a code/cipher theory fits the VMs text.

The one cipher idea and plaintext language that does notably better than all others is an nGram mapping with the language of Dante as the plaintext. This is a form of early Italian, and it produces results significantly better than all other languages tried with nGrams, including Latin, German, English, Spanish, Dutch, Chinese, etc.

I’ll post some results from this nGram/Dante GA later.

There is a significant obstacle with applying computational techniques to the VMs, and that is the machine transcriptions of the VMs text. Basically they differ substantially, to the extent that statistics obtained with, say, EVA do not match well with statistics obtained with, say, Voyn_101. A particular problem is glyph bloat … my opinion is that GC’s Voyn_101 transcription contains many more glyphs than the scribes were actually using. Little differences between the ways of writing “9”, for example, are classified as different glyphs. This plays havoc with statistical analysis. Thus I have a procedure that filters the Voyn_101 and remaps e.g. those multiple “9” glyphs to the same glyph. This allows a smaller, more realistic, search space. But it still doesn’t address the question of what strokes make up a single glyph, which is often open to interpretation. Thus any nGram mapping procedure has to allow for at least 1-3 Grams in the Voynich to be reasonably sure of covering the glyph correspondences properly.
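To make the remapping and the 1-3 Gram idea concrete, here is a minimal sketch. It is not the actual filter procedure: the remap table is a placeholder in which `A` and `B` merely stand in for variant forms of “9”, and they are not real Voyn_101 codes.

```python
from collections import Counter

# Placeholder remap: "A" and "B" stand in for variant ways of writing "9".
# They are NOT real Voyn_101 codes.
VARIANTS = str.maketrans({"A": "9", "B": "9"})


def normalise(word):
    """Collapse variant glyphs onto one canonical glyph."""
    return word.translate(VARIANTS)


def ngram_counts(words, max_n=3):
    """Count every 1..max_n glyph sequence inside the (normalised) words."""
    counts = Counter()
    for word in map(normalise, words):
        for n in range(1, max_n + 1):
            for i in range(len(word) - n + 1):
                counts[word[i:i + n]] += 1
    return counts


print(ngram_counts(["8am", "oA", "oB"]).most_common(5))
```

Merging the variants before counting is what shrinks the search space: both `oA` and `oB` contribute to the same 2-Gram `o9`.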

Here is an extract of the Dante Alighieri text that matches decently using nGrams to the VMs:

Cjant Prin

A metàt strada dal nustri lambicà

mi soj cjatàt ta un bosc cussì scur

chel troj just i no podevi pì cjatà.

A contàlu di nòuf a è propit dur:

stu post salvàdi al sgrifàva par dut

che al pensàighi al fa di nòuf timour!

Che colp amàr! Murì a lera puc pi brut!

Ma par tratà dal ben chiai cjatàt

i parlarài dal altri chiai jodùt.

I no saj propit coma chi soj entràt:

cun chel gran sùn che in chel moment i vèvi,

la strada justa i vèvi bandonàt.

Necuàrt che in riva in su i zèvi

propit la ca finiva la valàda

se tremaròla tal còu chi sintèvi

in alt jodùt iai la so spalàda

vistìda belzà dai rajs dal pianèta

cal mena i àltris dres pa la so strada.

(This is modified from a reply to Knox who commented on an earlier post.)

How about a “Verbose Homophonic cipher”?

I’ve had a bit of a hiatus from the VMs, but it’s always popping up in my mind and niggling me, even when I haven’t got time to spend on it. The latest niggle was the idea that the VMs scribe used a set of simple tables that showed how to convert plaintext letters into codes. So, in an example table, letter “A” is written “4oh”, letter “B” is written “8am” and so on. Also, spaces in the plaintext have their own code. Veteran VMs researcher Philip Neal informed me that this is called a “verbose homophonic cipher”.

Elaborating on the idea: the scribe uses one of the set of tables for each folio s/he is writing. To encipher the plaintext onto the folio, it’s simply a matter of writing down the VMs “word” for each letter in the plaintext word. If there is more space on the line for the next plaintext word, the scribe writes down the code for space, and then the codes for the letters in the next word. Long spaces are written by writing the code for space more than once … The next line is used for the next word, and so on.

On the next folio, a different table may be used.

It’s hard to imagine the justification for such a scheme, but it does appear (at least initially) to fit some of the features of the VMs script (especially the repeating VMs words often seen).

I made a quick test that looks at VMs word frequencies on a single folio (in the Recipes section, which has the densest text). These showed a word frequency distribution that looks similar to the letter frequency distribution in Latin, apart from the most frequently occurring word, which is much more frequent and which, I suggest, would code for a space in the cipher.

However, on a typical folio, there are usually many more VMs words than there are plaintext letters. So the scheme has to be extended to allow the scribe a choice between several different VMs words to encode a single letter. Each table must have a set of words appearing in each plaintext letter column. Something like this:

| Plaintext | (space) | a | b | … |
| --- | --- | --- | --- | --- |
| VMs words | 8am ay okoe | 4ohoe 2ay 1coe | faiis 4ay oka | … |
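The scheme in the table can be sketched in code as follows. Only the three columns shown above are filled in, the random choice between alternatives is an assumption about how a scribe might pick, and the names `encipher` and `decipher` are illustrative.

```python
import random

# The three columns from the example table; a full table would have
# one entry per plaintext letter.
TABLE = {
    " ": ["8am", "ay", "okoe"],
    "a": ["4ohoe", "2ay", "1coe"],
    "b": ["faiis", "4ay", "oka"],
}


def encipher(plaintext, table=TABLE, rng=None):
    """Write one VMs word per plaintext character, choosing freely
    among the alternatives listed for that character."""
    rng = rng or random.Random()
    return [rng.choice(table[ch]) for ch in plaintext]


def decipher(words, table=TABLE):
    """Invert the table; this works because no VMs word appears twice."""
    reverse = {w: ch for ch, ws in table.items() for w in ws}
    return "".join(reverse[w] for w in words)


words = encipher("ab ba", rng=random.Random(1))
print(" ".join(words))
print(repr(decipher(words)))
```

Because every plaintext character becomes a whole VMs word, the ciphertext is far longer than the plaintext, which is the “verbose” part of the name.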

If this is indeed the scheme, one would expect to see patterns in the VMs word sequences that match patterns seen in the letter sequences of e.g. Latin words. Also, as Philip Neal pointed out, patterns like “word1 word2 word2 word1” would indicate a plaintext letter sequence of either “vowel consonant consonant vowel” or vice versa.

Looking through the whole of the VMs for sequence patterns (on the same line of text), I found the following:

- There are no 4 word sequences that repeat at all

- There are only four 3 word sequences that repeat, and each only twice

- There are no sequences at all of the form “xyyx”

(all of which I find rather surprising, and thought provoking).

So it looks like this hypothesis is dead in the water, and can be ticked off that long list of “things it might have been but in fact don’t fit”!

(It turns out that Elmar Vogt has been working on a related, but more sophisticated, idea which he describes on his blog and is called a “Stroke Theory”.)

The Cipher Black Box

This is how the cipher works: a plaintext is fed in to the “Black Box” and, based on a set of unknown parameters P, is enciphered by an unknown process in the box into the ciphertext, which is written onto the folio.

The internal mechanism of the Black Box is unknown. The only information we have is the output ciphertext (but we have a lot of it).

What strategies can we employ to discover how the internal mechanism of the Black Box works? What are the input parameters to the cipher? Can we avoid having to find out about the mechanism, and directly reverse the cipher, so yielding the plaintext from the ciphertext?

If we could “prod” the Box as enciphering was taking place (e.g. by twiddling one of the input parameters Pi), then by observing the output ciphertext, we could start to build a theory about how the cipher works.

Suppose we devise a candidate cipher that, from a sequence of random plaintext letters, and using a set of parameters we choose, generates a chosen line of VMs text exactly. Mathematically:

ciphertext = C(plaintext,P0…Pi)

where C is the cipher function. We can surely find a function that satisfies these conditions: some sort of State Machine is perhaps most appropriate. When twiddling one of the parameters Pi, and measuring the change in the ciphertext, we are able to calculate the partial derivative of C with respect to Pi:

δC/δPi = Δ(ciphertext)

If the variation on the ciphertext produces new ciphertext that is compatible with the rest of the ciphertext in the VMs, then this would suggest that Pi is a good parameter (i.e. that it is a candidate for retention in the candidate cipher). If, on the other hand, the variation produces invalid new ciphertext, the parameter is poor, and is a candidate for removal from the candidate cipher.

Potential algorithm:

1. Create a state machine containing a cipher C that takes (many) Parameters P

2. The state machine parameters are adjusted so that a (random) plaintext input string produces a valid sequence of VMs ciphertext

3. Each parameter is varied slightly in value, and the state machine asked to reprocess the plaintext to produce new ciphertext

4. The similarity of the new ciphertext is compared with the VMs corpus

5. The parameter in question is demoted or promoted in weight

6. Once the effects of all parameters have been examined they are graded by weight

7. Good parameters are kept, poor ones discarded

8. Develop an overall score for the state machine, based on a suitable metric

9. Memorize this state machine if it has the best score so far

10. Go to step 1, but now with the reduced set of parameters

This process is repeated for many different trial state machines, and the best memorized.
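The parameter-grading part of this loop can be illustrated with a toy. Everything here is a made-up stand-in: the “cipher” is a trivial function of two invented parameters (`offset` and `step`), the “corpus” is a short repeating string, and similarity is measured by shared bigrams. It only shows the promote/demote mechanics, not a real candidate for the VMs.

```python
def toy_cipher(plaintext, params, alphabet="abc"):
    """Hypothetical stand-in for C(plaintext, P0...Pi)."""
    n = len(alphabet)
    return "".join(
        alphabet[(alphabet.index(ch) + params["step"] * k + params["offset"]) % n]
        for k, ch in enumerate(plaintext)
    )


def bigram_score(text, corpus):
    """Fraction of bigrams in `text` that also occur in `corpus`."""
    known = {corpus[i:i + 2] for i in range(len(corpus) - 1)}
    pairs = [text[i:i + 2] for i in range(len(text) - 1)]
    return sum(p in known for p in pairs) / len(pairs)


def grade_parameters(plaintext, params, corpus, delta=1):
    """Twiddle each parameter in turn; promote it (+1) if the new
    ciphertext still looks like the corpus, otherwise demote it (-1)."""
    baseline = bigram_score(toy_cipher(plaintext, params), corpus)
    weights = {}
    for name in params:
        trial = dict(params, **{name: params[name] + delta})
        score = bigram_score(toy_cipher(plaintext, trial), corpus)
        weights[name] = 1 if score >= baseline else -1
    return baseline, weights


baseline, weights = grade_parameters(
    "aaaaaa", {"offset": 0, "step": 1}, corpus="abcabcabc"
)
print(baseline, weights)
```

In this toy, changing `offset` still yields text made of corpus bigrams, so it is promoted, while changing `step` produces bigrams never seen in the corpus, so it is demoted.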

Inverting the Cipher

TBA

Strong’s Cipher

Strong’s “peculiar system of a double reversed arithmetic progression of a multiple alphabet” is a puzzling description, but GC recently (Feb 2010) explained it as “‘double reversed arithmetic progression’ as defined by the string 1-3-5-7-9-7-5-3-1-4-7-4” (although I think the sequence given is an example, rather than the definition). The number of alphabets is “a handful”.

If we suppose that the cipher is indeed constructed like this, then can we crack it computationally?

First we need to make some assumptions. Let’s generously assume that the number of alphabets is 10. Let’s then assume that these alphabets are rotated through in a sequence that is 17 long (the number 17 is picked since it crops up as a feature of the VMs text in many places). Let’s not assume that the sequence is double, or reversed, or anything else: it’s just a sequence of alphabet numbers. Let’s assume that each alphabet contains 21 characters: abcdefghilmnopqrstuvx

We then take a sample of VMs text (I chose the first “paragraph” of f1v)

h1s9 1o8am oe oek1c9 1ay Fax ap 9kcc9 1ay oy o19 81o eho89 oho8ay 1o89 8o H9 HoH9 29 8h2ii9 K9 hok1o89 8ae 8oe 1ohco 8aiy 8ap so1c9 1o ho89

and, equipped with a large dictionary of Latin words, we start to build a possible cipher. To do this, we start by looking at the first VMs word “h1s9”, and pick a Latin word of the same length, at random: “acri”. With this pair we can start to construct the cipher table:

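| Voynich | o | 9 | e | 1 | 8 | a | h | y | c | k | 2 | i | K | s | m | H | p | F | x | g | & |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Alphabet 0 | . | . | . | . | . | . | a | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 1 | . | . | . | c | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 2 | . | . | . | . | . | . | . | . | . | . | . | . | . | r | . | . | . | . | . | . | . |
| Alphabet 3 | . | i | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 4 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 5 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 6 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 7 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 8 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 9 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |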

We continue with the next word: “1o8am” and a random Latin word of the same length: “paveo”, and update the table:

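| Voynich | o | 9 | e | 1 | 8 | a | h | y | c | k | 2 | i | K | s | m | H | p | F | x | g | & |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Alphabet 0 | . | . | . | . | . | . | a | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 1 | . | . | . | c | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 2 | . | . | . | . | . | . | . | . | . | . | . | . | . | r | . | . | . | . | . | . | . |
| Alphabet 3 | . | i | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 4 | . | . | . | p | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 5 | a | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 6 | . | . | . | . | v | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 7 | . | . | . | . | . | e | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 8 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | o | . | . | . | . | . | . |
| Alphabet 9 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |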

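The next word is “oe” and the random Latin word is “do”. The Latin letter “d” goes under the Voynich “o” column in Alphabet 9. The sequence then returns to Alphabet 0, so the Latin letter “o” has to be placed under the Voynich “e” column in Alphabet 0: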

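| Voynich | o | 9 | e | 1 | 8 | a | h | y | c | k | 2 | i | K | s | m | H | p | F | x | g | & |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Alphabet 0 | . | . | o | . | . | . | a | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 1 | . | . | . | c | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 2 | . | . | . | . | . | . | . | . | . | . | . | . | . | r | . | . | . | . | . | . | . |
| Alphabet 3 | . | i | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 4 | . | . | . | p | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 5 | a | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 6 | . | . | . | . | v | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 7 | . | . | . | . | . | e | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 8 | . | . | . | . | . | . | . | . | . | . | . | . | . | . | o | . | . | . | . | . | . |
| Alphabet 9 | d | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . | . |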

We continue in this vein, picking random Latin words to match the VMs words, and attempting to place them into the cipher. This starts off easily, but rapidly becomes impossible, with the Latin words chosen: when we come to place a letter into the required column at the current alphabet in the sequence, we find that the position is already occupied by a different letter, or that the alphabet already contains that letter but in a different column.

In such cases we try to select a different Latin word to see if it will fit. If we exhaust all possible Latin words, then we backtrack to the beginning, and start afresh with a new sequence and new choices.
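The placement test described above can be sketched as follows. This is not the original program: `try_place` is an illustrative name, and the alphabet sequence used in the example is assumed to run 0, 1, …, 9 and then back to 0, as in the worked tables. The backtracking search over Latin words is left out.

```python
def try_place(table, sequence, pos, vms_word, latin_word):
    """Try to add one (VMs word, Latin word) pair to the cipher table.

    `table[a]` maps Voynich glyph -> Latin letter for alphabet `a`.
    Returns the new glyph position on success, or None if the pair
    conflicts with what is already in the table (table left untouched).
    """
    if len(vms_word) != len(latin_word):
        return None
    pending = {}
    for offset, (glyph, letter) in enumerate(zip(vms_word, latin_word)):
        alphabet = sequence[(pos + offset) % len(sequence)]
        cells = {**table[alphabet], **pending.get(alphabet, {})}
        if cells.get(glyph, letter) != letter:
            return None                 # cell already holds a different letter
        if any(l == letter and g != glyph for g, l in cells.items()):
            return None                 # letter already sits in another column
        pending.setdefault(alphabet, {})[glyph] = letter
    for alphabet, cells in pending.items():
        table[alphabet].update(cells)   # commit only when the whole word fits
    return pos + len(vms_word)


table = [dict() for _ in range(10)]
sequence = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 0, 1, 2, 3, 5, 7, 8]  # assumed
pos = 0
for vms, latin in [("h1s9", "acri"), ("1o8am", "paveo"), ("oe", "do")]:
    pos = try_place(table, sequence, pos, vms, latin)
print(table[0], table[9], pos)
```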

Most of the time, this algorithm doesn’t get further than a few words into the text before failing. Occasionally it gets quite a long way. Of course, the search space of possible Latin word combinations is staggering …

This is one of the more interesting attempts at deciphering f2v:

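| Voynich | o | 9 | e | 1 | 8 | a | h | y | c | k | 2 | i | K | s | m | H | p | F | x | g | & |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Alphabet 0 | l | . | t | a | s | . | b | o | f | . | n | . | . | . | . | . | . | . | . | . | . |
| Alphabet 1 | m | i | . | o | l | b | . | . | r | . | . | t | . | . | . | g | . | . | . | . | . |
| Alphabet 2 | f | a | u | e | . | . | . | s | . | . | . | i | . | n | . | . | . | . | . | . | . |
| Alphabet 3 | . | o | . | v | f | . | i | . | . | n | u | . | . | . | . | . | . | e | . | . | . |
| Alphabet 4 | . | a | . | h | d | i | . | . | . | . | . | . | o | . | . | . | . | . | . | . | . |
| Alphabet 5 | e | s | . | i | t | o | . | . | . | . | . | . | . | . | . | . | . | . | a | . | . |
| Alphabet 6 | u | . | . | . | r | s | c | . | . | . | . | . | . | . | . | . | . | . | . | . | . |
| Alphabet 7 | u | . | i | . | n | b | . | s | r | . | . | . | . | . | . | m | t | . | . | . | . |
| Alphabet 8 | e | i | . | . | . | d | u | . | . | c | . | . | . | . | a | . | . | . | . | . | . |
| Alphabet 9 | s | . | . | u | . | . | . | . | . | n | . | . | . | . | . | a | . | . | . | . | . |

Sequence vals = 0 1 2 3 4 5 6 7 8 9 0 1 2 3 5 7 8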

h1s9 1o8am oe oek1c9 1ay Fax ap 9kcc9 1ay oy o19 81o eho89 oho8ay 1o89 8o H9 HoH9 29 8h2ii9 K9 hok1o89 8ae 8oe 1ohco 8aiy 8ap so1c9 1o ho89

bono herba st muniri abs eia st infra vos eo meo diu iussi fiendo offa tu mi alga us nuntio os cuculla
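As a check, the table and sequence above can be applied mechanically to the VMs text. The sketch below assumes that the position in the 17-long sequence advances by one for every glyph and ignores word spaces; `decrypt` is an illustrative name, and glyphs with no table entry are shown as `?`.

```python
GLYPHS = "o 9 e 1 8 a h y c k 2 i K s m H p F x g &".split()

# Rows copied from the table above, Alphabet 0 to Alphabet 9.
ROWS = [
    "l . t a s . b o f . n . . . . . . . . . .",
    "m i . o l b . . r . . t . . . g . . . . .",
    "f a u e . . . s . . . i . n . . . . . . .",
    ". o . v f . i . . n u . . . . . . e . . .",
    ". a . h d i . . . . . . o . . . . . . . .",
    "e s . i t o . . . . . . . . . . . . a . .",
    "u . . . r s c . . . . . . . . . . . . . .",
    "u . i . n b . s r . . . . . . m t . . . .",
    "e i . . . d u . . c . . . . a . . . . . .",
    "s . . u . . . . . n . . . . . a . . . . .",
]
TABLE = [dict(zip(GLYPHS, row.split())) for row in ROWS]
SEQUENCE = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 0, 1, 2, 3, 5, 7, 8]


def decrypt(text):
    """Replace each glyph by the letter in its column of the alphabet
    that the sequence selects for that glyph position."""
    out, pos = [], 0
    for word in text.split():
        letters = []
        for glyph in word:
            letter = TABLE[SEQUENCE[pos % len(SEQUENCE)]][glyph]
            letters.append("?" if letter == "." else letter)
            pos += 1
        out.append("".join(letters))
    return " ".join(out)


vms = ("h1s9 1o8am oe oek1c9 1ay Fax ap 9kcc9 1ay oy o19 81o eho89 "
       "oho8ay 1o89 8o H9 HoH9 29 8h2ii9 K9 hok1o89")
print(decrypt(vms))
```

Applied to the first 22 VMs words, this reproduces the Latin words listed above, from “bono” to “cuculla”.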