Archive

Fun with Grove Words and Cipher Wheels

What is a Grove word? The answer is a little fuzzy, but simplistically a Grove word is a VMS word that begins with one of the gallows glyphs. These words are often page or paragraph initial. Emma May Smith has a good explanation in her recent blog entry.

Mr. Grove observed the peculiar feature that some words beginning with a gallows glyph are still valid words once the gallows glyph is removed. For example, the EVA word kodaiin starts with gallows k, and odaiin is also a valid word.

It turns out that if you look at all words in the VMS, 46% of them have this property: remove the first glyph and you are left with a valid VMS word. Compare this with English, where only around 8% of words produce valid words if you remove the first letter. Making up the 46% we have 38% from non-Grove words (i.e. non-gallows initial), and 8% from Grove words.

To round out the statistics, about 13% of all VMS words have an initial gallows glyph.
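The counting behind these percentages can be sketched in a few lines of Python. This is only an illustration under stated assumptions: each EVA character is treated as one glyph, the gallows set is taken to be EVA k, t, p and f, the fractions are computed over distinct words, and the sample word list is made up for the example rather than taken from a transcription.

```python
GALLOWS = set("ktpf")  # EVA gallows letters (assumed to be the relevant set)

def drop_first_stats(words):
    """Return (share of words still valid without their first glyph,
    share that are additionally gallows-initial), over distinct words."""
    vocab = set(words)
    # Words whose remainder, after dropping the first character, is also in the vocabulary.
    survivors = [w for w in vocab if len(w) > 1 and w[1:] in vocab]
    grove = [w for w in survivors if w[0] in GALLOWS]
    return len(survivors) / len(vocab), len(grove) / len(vocab)

# Hypothetical toy vocabulary, not real VMS statistics.
sample = ["kodaiin", "odaiin", "daiin", "aiin", "chedy", "qokedy", "okedy"]
all_share, grove_share = drop_first_stats(sample)
print(f"{all_share:.2f} {grove_share:.2f}")
```

Run against a full transcription word list, the same function would give figures comparable to the 46% and 8% quoted above, depending on whether tokens or distinct words are counted.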

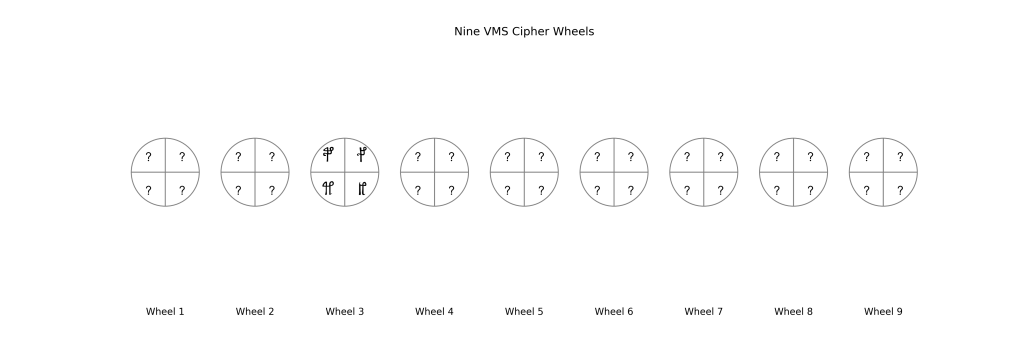

Consider the nine wheels above, where one of the wheels contains gallows glyphs, and the other wheels contain other glyphs. These wheels can be selected in 2^9 − 1, i.e. 511, different ways, to make words of length between 1 and 9.

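If every selection is equally likely, the probability of selecting wheel 3 as the first wheel for the word is 64/511, or about 12.5%: wheels 1 and 2 must be skipped, wheel 3 must be used, and the remaining six wheels can be used or not (2^6 = 64 selections). In other words, with these 9 wheels, 12.5% of the time we’d create a gallows-initial “Grove” word, very close to what we observe in the VMS (13%). In fact, this figure depends only weakly on the number of cipher wheels: with n wheels it is 2^(n−3) / (2^n − 1), which is 14.3% for three wheels, 12.9% for five, and approaches 12.5% as n grows. So as long as there are at least three wheels, they are used left to right, and the gallows glyphs fully occupy the third wheel, roughly 12.5% of the generated words will be Grove types.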
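The 12.5% figure can be checked by brute force. The sketch below assumes that every non-empty selection of wheels is equally likely and that wheels are always read left to right; the function name and parameters are illustrative only.

```python
from itertools import combinations

def grove_selections(n_wheels, gallows_wheel=3):
    """Count non-empty wheel selections, and those whose leftmost wheel is the gallows wheel."""
    wheels = range(1, n_wheels + 1)
    total = 0
    grove = 0
    for k in range(1, n_wheels + 1):
        # combinations() yields each selection in left-to-right wheel order.
        for chosen in combinations(wheels, k):
            total += 1
            if chosen[0] == gallows_wheel:
                grove += 1
    return grove, total

for n in (3, 5, 9, 12):
    g, t = grove_selections(n)
    print(n, g, t, f"{100 * g / t:.2f}%")
```

For nine wheels this counts 64 gallows-initial selections out of 511.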

As a corollary, it’s clear that for Grove types generated with the wheels, removing the first glyph will produce a valid word, as it is equivalent to generating a word starting at wheel 4 or later.

So what of the 54% of VMS words that lack this property, i.e. removing the initial glyph does not produce a valid VMS word? This can be explained if the number of different words used and written in the VMS is simply less than the total number of possible words that the author’s wheels can produce. What is the expected vocabulary size if we know there are 7,552 words written in the VMS (Takeshi), and for 54% of them the shortened counterpart is missing? It is simply 1.54 x 7,552 = 11,630 words, or thereabouts: the 7,552 written words plus one unwritten word for each of the 54%.

(Aside: the wheels above could just as easily be represented and used as a table with nine columns.)

In summary, “Grove” words (gallows initial) are ~13% of all words in the Voynich manuscript, and this fraction is what you’d expect if the text was produced using cipher wheels.

Entropy of the Voynich text

The Shannon Entropy of a string of text measures the information content of the text. For text that is completely random i.e. where the appearance of any character is as likely as the appearance of any other, the entropy (or “disorder”) is high. For a text which is a long string of identical characters, for example, the entropy is low.

Mathematically, the Shannon Entropy is defined as:

$$\text{Entropy} = -\sum_{i=1}^{N} \text{prob}_i \, \log(\text{prob}_i)$$

where $\text{prob}_i$ is the relative frequency of the i’th distinct character in the text, and the sum is over all $N$ distinct characters.
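As a minimal sketch, the definition translates directly into Python. The logarithm base is not stated in the text; base 2 (entropy in bits per character) is assumed here, which would be consistent with values around 4 for natural-language text. The function name is illustrative.

```python
import math
from collections import Counter

def shannon_entropy(text):
    """Character-level Shannon entropy in bits (base-2 logarithm is an assumption)."""
    counts = Counter(text)
    total = sum(counts.values())
    # Sum -p * log2(p) over every distinct character in the text.
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

print(shannon_entropy("aaaaaaaa"))  # a run of identical characters: lowest possible entropy
print(shannon_entropy("abcdabcd"))  # four equally frequent characters
```

Whether spaces and punctuation are counted as characters changes the result, so texts should be preprocessed the same way before their entropies are compared.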

If the Voynich text is randomly created (by whatever means), we’d expect it to have high entropy (i.e. be very disordered). What we in fact find is that the text is ordered, with low Entropy, and is rather more ordered than English, for example. The result of comparing the Voynich text with several other texts in different languages is shown in the table below.

| Language | Source | Entropy |
|---|---|---|
| Voynich | GC’s Transcription | 3.73 |
| French | Text from 1367 | 3.97 |
| Latin | Cantus Planus | 4.05 |
| Spanish | Medina 1543 | 4.09 |
| German | Kochbuch 1553 | 4.15 |
| English | Thomas Hardy | 4.21 |
| Early Italian | Divine Comedy 1300 | 4.23 |
| None | Random characters | 6.01 |

The last entry in the table shows the Entropy for a random text: at 6.01 it is about 1.6 times the Entropy of the Voynich text (3.73).

Landini’s Challenge

An excerpt from Landini’s challenge text (text he generated using an undisclosed method, supposed to replicate the features of the VMs text):

qopchdy chckhy daiin ¬ ½shxam chor otechar okcharain ryly sheodykeyl

sheodykeyl daiin shd okaiin qokain qokal yteoldy otedy qokydy opchedy

otal oldar chor lkeedol eer ol dair chedy daiin ockhdar cpheol chedy

xar qokaiin y chedy kshdy ololdy aiin char y okeey oldar qokaiin lsho

daiin olsheam qoeey chedy dchos pshedaiin shedy d qol key sheol or

cpheeedol qokedy qokaiin daiin cthosy chedy ar aiir chedy teeol aiin

cheey y cheam oky qokaiin daldaiin loiii¯ ar shtchy chedy aldaiin

ydchedy daiin shd okaiin qokain daiin qotcho chedy daiin lchy olorol

otedy qockhor shol daiin paichy chedy ar shdair chedal chedy kchdaldy

chckhy otakar qokedy s qooko chor daiin otcholchy chedy daiin koroiin

qokain qokedy kosholdy ol kchedy kshdy qokaiin ar shaikhy olaldy seees

ar oteodar chedy oteeol shedy daiin key dain daiin keeokechy chedy

lchey ail lchedy sches ol dsheeo otol odaiin qokain daiin sheeod chshy

chedy qoekedy tair sain qocheey aiin cheey chaiin ols shedy sheolol

daiin lcheol chedy daiin pchoraiin oshaiin chedy lchey lor sal aiin

cheey y dsheom shedy todydy cheor saiin shdaldy daiin ofchtar daiin

Here are some thought-provoking results from analysing Landini’s text, as suggested by Knox, together with the VM text and comparisons with English, Latin, German, French and Spanish. These use a new form of the Genetic Algorithm (GA), described below.

Summary

It looks to me as though Landini either generated his text from a transcription of the VM itself, or his algorithm for generating that text is a good emulation of the encoding process used in the VM. In other words the Landini “language” is a good candidate as a plaintext language for the VM, as opposed to the European languages tested.

Results

Here is a table which shows the GA’s efficiency at converting/translating between Voynich, Landini, and the other languages.

(In the table, the best possible score is 1.0 – see below for an explanation)

Asking the GA to translate English to English, or Latin to Latin, etc. results in a high efficiency score, as expected. Note that the Landini to Landini efficiency is 0.97 – almost perfect.

The GA performs moderately at converting between the languages and the Landini text. But what is most striking (to me) is the good efficiency for converting Voynich to Landini (0.74) and Landini to Voynich (0.89).

Some Notes on the table

To look at this I revised my GA code so that it was more flexible, and I jettisoned the use of separate dictionaries. Here is how the GA now functions. It can convert/translate between any language text samples.

1) Two text files are read in: the “source” text, and the “target” text. This could be, for example, a source file containing Landini’s text, and a target file containing Spanish text, if we want to convert from Landini to Spanish.

2) The text in each file is processed separately, producing two word lists, and two sets of n-Gram frequency tables.

3) The chromosomes are generated with random mappings between the source n-Grams and the target n-Grams.

4) The GA evolves the chromosomes by trying to maximise their cost. The difference now is that when a target word is generated from the source text using the mappings, it is looked up in the target word list created in 2) above, rather than in a separate dictionary.

5) After training, the best chromosome can have a maximum cost value of 1.0, which would correspond to a perfect conversion between the source text and the target text (i.e. every word produced from the source text is found in the target text dictionary)

6) So we can feed the GA with two identical texts, and after training the score of the best chromosome should be 1.0, and indeed it approaches that (it doesn’t quite get there because only the top 100 n-Grams are translated, and so some characters in the source text cannot be translated).

7) The word and n-Gram frequency lists are made from the entirety of each text, but (for this exploratory study) the training takes place on only the first 50 “words” in the source text, and uses only the first 100 n-Grams for mapping. Thus if the 50 words of Voynich chosen contain several rare characters, then for those the mapping will fail because those rare characters do not appear in the n-Gram list, and this will result in a lower score.

8) In all cases the “X->X” score in the table (i.e. the diagonal) represents the best score possible for that language, and is a normalisation for the other numbers in the table. I should really revise the table and divide out the off-diagonal scores by the diagonal normalisations.

9) An improvement would be to configure the n-Gram list to be, say, 200 long, and use more source (Voynich) words for the training. The downside of this is mainly execution speed.

10) These runs were with n-Grams up to 3: it would be better to go to 4 at least.

11) I think Landini gets good scores because the character set he uses is very small. Knox comments: “A factor must be that the Landini Challenge has built-in frequency matches to any transcription of the VMs. Also, there is no meaningful correspondence in the letter sequence of one word to another in Landini. The difficulty fits what I said the VMs may be.”
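The notes above can be sketched roughly as follows. This is not the original GA code: the greedy longest-match substitution, the crossover and mutation scheme, the population size and all function names are assumptions made for illustration. Only the overall shape follows the notes (n-Gram lists built from each text, chromosomes as random source-to-target n-Gram mappings, and a score equal to the fraction of converted source words found in the target word list).

```python
import random
from collections import Counter

def top_ngrams(words, max_n=3, limit=100):
    """Most frequent character n-grams (n = 1..max_n) over a word list."""
    counts = Counter(
        w[i:i + n]
        for w in words
        for n in range(1, max_n + 1)
        for i in range(len(w) - n + 1)
    )
    return [g for g, _ in counts.most_common(limit)]

def translate(word, mapping, max_n=3):
    """Greedy longest-match substitution (assumed); None if a piece has no mapping."""
    out, i = [], 0
    while i < len(word):
        for n in range(min(max_n, len(word) - i), 0, -1):
            piece = word[i:i + n]
            if piece in mapping:
                out.append(mapping[piece])
                i += n
                break
        else:
            return None  # e.g. a rare character outside the n-gram list
    return "".join(out)

def score(mapping, source_words, target_vocab):
    """Fraction of source words whose conversion appears in the target word list."""
    hits = sum(1 for w in source_words if translate(w, mapping) in target_vocab)
    return hits / len(source_words)

def evolve(source_words, target_words, generations=200, pop_size=30, seed=0):
    rng = random.Random(seed)
    src_grams = top_ngrams(source_words)
    tgt_grams = top_ngrams(target_words)
    vocab = set(target_words)
    train = source_words[:50]  # train on the first 50 source words only
    pop = [{g: rng.choice(tgt_grams) for g in src_grams} for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=lambda m: score(m, train, vocab), reverse=True)
        parents = pop[: pop_size // 2]  # keep the better half
        children = []
        for _ in range(pop_size - len(parents)):
            a, b = rng.sample(parents, 2)
            # Uniform crossover followed by a single point mutation.
            child = {g: (a if rng.random() < 0.5 else b)[g] for g in src_grams}
            child[rng.choice(src_grams)] = rng.choice(tgt_grams)
            children.append(child)
        pop = parents + children
    best = max(pop, key=lambda m: score(m, train, vocab))
    return best, score(best, train, vocab)
```

Calling `evolve(words_a, words_b)` with two word lists returns the best mapping found and its score between 0 and 1. With identical source and target texts, an identity mapping scores 1.0 only if every character of the training words is covered by the n-Gram list, which mirrors the remark in note 6.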

Voynich Herbs

Edith Sherwood has a web site where she details compelling possible identifications for the plants depicted in the “herbal” pages of the VM.

Dana Scott’s page also has plausible identifications for the plants.

As has often been pointed out, if we look at the first Voynich “word” that appears on each page of the herbal part of the VM, we find that those words are unique, or appear elsewhere very rarely. It thus seems reasonable that the words may be the names of the plants depicted.

The GA was set up to find a set of n-Gram mappings that would convert a list of 111 Voynich first herbal words into Latin/English or Spanish. For this, dictionaries of Latin, English and Spanish herb/plant names were used.

The GA sought a mapping that would convert all the Voynich words for herbs/plants into as many valid plaintext (Spanish, English, Latin) words as possible. The best result was for a mixed English/Latin dictionary (see table): 31 of the 111 Voynich words were converted, a success rate of about 28%.

(One should never expect 100% success, due to missing names in the dictionary, transcription errors, missing n-Grams, incomplete n-Grams etc.)

The results are shown below in tabular form, together with Dana Scott’s and Edith Sherwood’s identification. The first column shows the folio in the VM, the second shows the first Voynich word on that folio. For the GA identification columns (3 and 4) the Voynich mapped word is shown, in quotation marks if not found in the associated dictionary, and in bold if found in the dictionary.

Note that, probably unsurprisingly, nowhere do the IDs from the GA in Spanish, English/Latin and Scott/Sherwood, agree! NOT YET, anyway 🙂

(What amuses me about this mapping technique is that it tends to produce words that sound plausible in the target language. E.g. for f4r the Latin/English word “paptise” sounds like a valid word.)